From AI to autonomy: why Shankar Sastry focuses on trust

The UC Berkeley pioneer will open NCAS’26 with a keynote on one of the hardest questions in tech: how autonomous systems can safely operate.

Published on March 19, 2026

Bart, co-founder of Media52 and Professor of Journalism, oversees IO+, events, and Laio. A journalist at heart, he keeps writing as many stories as possible.

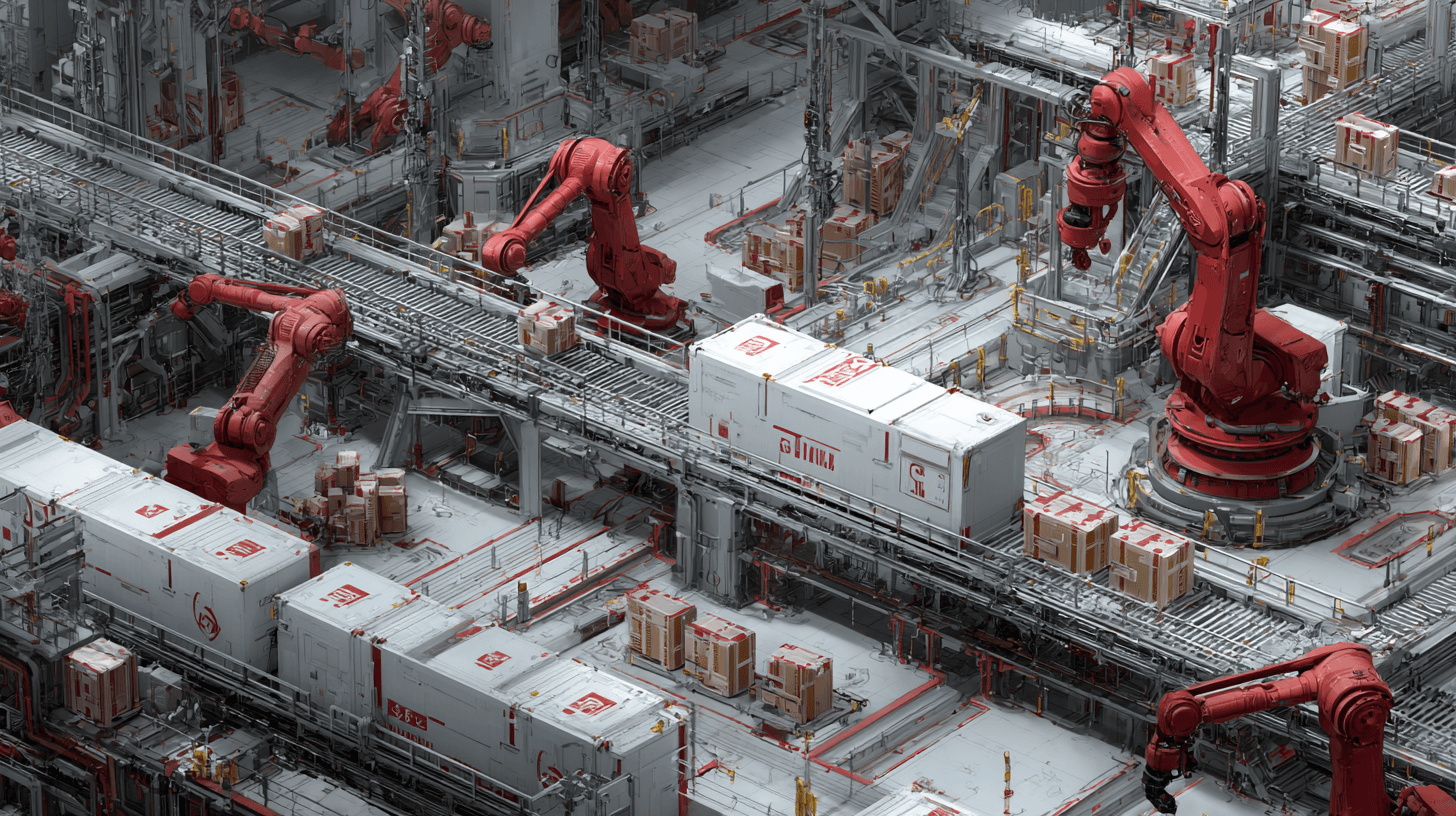

Autonomous systems are leaving the laboratory. Robots now assist surgeons, software steers vehicles, and intelligent systems increasingly manage industrial processes and infrastructure. But as artificial intelligence moves from controlled environments into messy real-world settings, one question becomes unavoidable: when do we trust a machine enough to let it act independently?

That question sits at the heart of Shankar Sastry's keynote lecture at the Nationaal Congres Autonomous Systems 2026, which takes place on April 2 in Drachten. His talk, titled “From AI to Autonomy: Building Trustworthy Systems for the Real World,” addresses the technological and societal challenge that increasingly defines the next phase of AI: reliability.


Shankar Sastry

Sastry is well placed to address that challenge. Over several decades, the professor at the University of California, Berkeley has helped shape the scientific foundations behind cyber-physical systems, robotics, and autonomous technologies. His career traces the evolution from theoretical control systems to machines that interact directly with the physical world.

When intelligence meets reality

Artificial intelligence often appears effortless in demonstrations: models recognise images, predict patterns, or generate convincing text. But autonomy introduces a different level of complexity. An autonomous system must interpret uncertain sensor data, adapt to unpredictable environments and continue functioning safely even when something goes wrong.

In other words, autonomy requires more than intelligence. It requires engineering.

Sastry’s work has long focused on that intersection between algorithms and physical systems. His research spans embedded and autonomous software, robotics, computer vision, nonlinear control and hybrid systems: mathematical frameworks that describe systems combining digital logic with continuous physical behaviour.

That combination is exactly what defines modern autonomous machines: software making decisions while interacting with physical processes such as motion, forces, and energy.

At Berkeley, Sastry has also explored applications ranging from autonomous vehicles to robotic surgery and networked control systems. In those domains, the question is rarely whether an algorithm can perform a task. The real question is whether the system can do so reliably enough to be trusted with human lives, infrastructure, or critical operations.

From research to deployment

Sastry’s influence extends beyond academia. For several years, he served as Director of the Information Technology Office at DARPA, one of the world’s most influential research organisations for advanced technologies.

DARPA has historically played a pivotal role in pushing emerging technologies from theory toward practical deployment, particularly in areas such as networking, robotics and autonomous systems. Sastry’s time there exposed him to the operational challenges that arise when advanced technologies move beyond prototypes.

Later, he returned to Berkeley, where he served as chair of the Electrical Engineering and Computer Sciences department and subsequently as Dean of Engineering. In those roles, he helped shape one of the world’s most influential ecosystems for robotics, AI, and cyber-physical systems research.

Throughout that career, one theme repeatedly surfaces: the importance of systems thinking. Autonomous technologies do not emerge from a single algorithm or component. They require integration of hardware, software, sensors, communication networks, and human operators.

Trust as the missing ingredient

That systems perspective explains the focus of Sastry’s keynote in Drachten. For years, the public discussion around AI has centred on capabilities: larger models, faster computation, more data. But the deployment of autonomous systems raises different questions. Can the system explain why it made a decision? Will it remain safe when sensors fail or conditions change? Can humans intervene when something unexpected happens?

Trust, in this sense, is not a philosophical concept but an engineering requirement.

In industrial robotics, for example, safety mechanisms determine whether humans can work alongside machines. In autonomous driving, trust depends on how reliably systems interpret complex traffic situations. In healthcare, it depends on whether clinicians understand how a system supports their decisions.

Without that trust, even technically impressive systems struggle to move beyond controlled pilot projects.

Why the discussion matters now

The theme of this year’s congress (“From Lab to Life”) reflects the broader shift happening in the autonomous systems field. Increasingly, companies and governments are not asking whether autonomy will become viable, but how it can be deployed responsibly and at scale.

That shift is particularly relevant for regions with strong manufacturing and high-tech systems industries. Autonomous technologies promise gains in productivity, safety, and efficiency, but only if systems are robust enough for everyday operations.

Events such as NCAS aim to connect research, industry, and policymakers around exactly that transition. Sastry’s keynote, therefore, serves as both a technical and strategic reflection. It highlights the gap between impressive AI demonstrations and the much harder task of building systems that operate safely in unpredictable environments.

The long road from AI to autonomy

For Sastry, the road from AI to autonomy has always been about more than software. It is about the discipline required to turn intelligence into reliable systems.

That involves mathematics, engineering, and testing, but also humility. Real-world systems inevitably encounter situations that designers did not anticipate. Building trustworthy autonomy means designing systems that can handle those moments safely.

As autonomous technologies move deeper into industry, healthcare and infrastructure, that challenge will only grow. Sastry’s message in Drachten is therefore likely to resonate far beyond the conference itself. The future of autonomous systems will not be decided solely by how intelligent machines become, but by whether society can trust them to act.

And that trust must be engineered.

Anyone who wants to see how autonomous the Netherlands and Europe are becoming would do well to be in Drachten on April 2. Tickets for the National Congress on Autonomous Systems are already available.